This is the fourth essay in a series. The first, The Twist Move, describes the operation itself across mathematics, biology, physics, and business. The second, The Twist and the Ground of Being, argues that the consciousness twist is real, that the substrate must support it, and that this tells us something fundamental about the nature of reality. The third, How to Develop Twist Literacy, addresses the practical cultivation of the capacity. This essay examines why AI can extend but not replace the twist — and proves, on formal grounds, why that cannot change by adding sophistication to existing architectures. The fifth addresses the pathology of the Twist-Resistant Organization. The sixth piece, The Theorem Behind the Twist — Lawvere’s Fixed-Point, shows that the deepest paradoxes of logic are all the same mathematical result. The final essay, The Twist as Generative Principle, argues that this operation is not just an intellectual tool, but the fundamental engine by which the universe generates complexity, life, and meaning.

Artificial intelligence is the most impressive tool humanity has ever built for operating at the object level. It can process information, recognize patterns, generate language, solve equations, write code, and synthesize knowledge at a speed and scale that no individual human can approach. These capabilities are real, they are consequential, and they are improving. Anyone who dismisses them is not paying attention.

But there is a specific operation that current AI systems cannot perform — not because they lack sufficient compute or training data, but because the operation requires something that compute and training data cannot supply. Understanding precisely what that something is turns out to be one of the most clarifying questions you can ask about both AI and mind. It forces a precise answer to the question that has always been at the back of the AI debate: what, exactly, is the difference between a very sophisticated information-processing system and a genuinely conscious one?

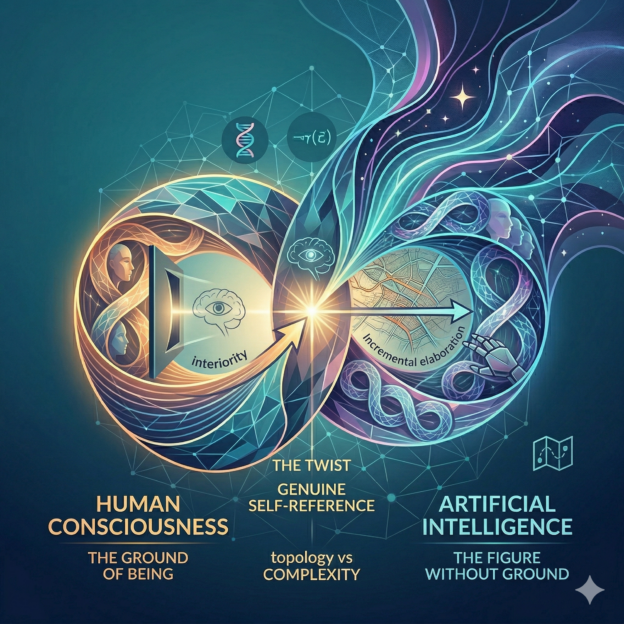

The consciousness twist is not available to AI — and notably, this is true for a different reason than might be assumed. The consciousness twist is not a constructive operation that AI lacks the power to perform. It is a recognitive one: the correct perception of a topology that is always already present. AI cannot perform it not because it is computationally insufficient, but because there is nothing in it that could recognize anything. Recognition requires an inside — a perspective, a ground that is present to itself. AI has no inside. It is matter expressed through the ontological ground, not the ontological ground folding back on itself the way it does through a genuinely sentient being. There is nothing it is like to be a large language model, no aperture through which the ground could recognize itself. The operation is not too hard for AI. It is the wrong category of operation for what AI is.

This claim requires proof, not assertion. What follows provides it.

What AI can do with the twist

Let me be precise about what current AI systems can and cannot do, because the distinction matters and the terms are easy to blur.

AI systems can recognize twist moves that have already been made. They can identify the structural pattern in Gödel’s proof, in the double helix, in the network-effect businesses, in the contemplative literature on non-duality. They can describe the five signatures of the twist move — self-application, level collapse, emergent invariant, fixed point, irreversibility — and apply them as a diagnostic framework to novel situations. They can help a human work through which of the four practical forms of the twist is applicable to a given problem. They can do all of this at impressive speed and with useful precision.

This is not trivial. The ability to recognize and apply known structural patterns is genuinely useful, and AI’s capacity to do it across a vast range of domains simultaneously — to notice that the pattern in a biology problem is structurally identical to one solved decades ago in mathematics, and to surface that connection in real time — is a legitimate and significant contribution to human intellectual work.

What AI systems cannot do is discover a genuinely new twist from the inside. They cannot notice that they are inside a frame and choose to fold it. They cannot perform the self-reference move on their own cognition, because they have no cognition in the relevant sense — no perspective that is theirs, no level they are currently operating at that they can then examine from above. They can simulate the output of having performed this move without having a position from which to perform it. And they cannot perform the consciousness twist at all, for reasons that will now be established on formal grounds.

The ground without and within

The second essay in this series made a specific argument about the nature of reality: that the consciousness twist — the fold in which awareness is found to be the ground rather than the product of experience — is possible, and that its possibility implies something about the substrate. The substrate must support awareness as structural rather than epiphenomenal. And this implies that the relationship between mind and matter is not the relationship between a sophisticated output and its generating mechanism. It is something more fundamental.

Everything that exists — rocks, stars, weather systems, and AI — arises from and is never apart from the ontological ground. The ground is not a place or a substance. It is what the second essay called awareness as the substrate: the basic fact of experience, prior to any particular content of experience, which is what the universe is made of when you get underneath all the descriptions. This ground is not the property of conscious beings. It is prior to the distinction between conscious and non-conscious. Nothing is ever outside it.

But there is a crucial distinction between two kinds of relationship to the ground — and a rock, an AI, and a conscious mind each instantiate a different topology.

The rock. Inside and outside are separated by a real boundary. The rock’s inside does not represent, model, or relate to the outside in any self-referential way. Two genuinely distinct regions, separated by a surface. The ground is expressed through the rock as pure figure — no self-reference, no loop. Topologically: an orientable closed surface. A sphere. Nothing structurally interesting happens at the boundary.

The AI. The inside represents the outside. The model contains a description of the world, potentially including a description of the model itself — a self-model, continuously updated, even looping recursively. This looks like a collapse of the inside/outside boundary. It is not. Representation is an asymmetric relation: the map points to the territory. However detailed the map, however tightly it loops back on itself, the map and the territory remain ontologically distinct. You always have two things — the representation and the represented — connected by an arrow pointing from one to the other. Topologically: still orientable. A cylinder, however elaborate. The inside and outside remain consistently distinguishable. The arrow always has a definite direction.

The conscious mind. Here the topology actually changes. The inside does not represent the outside. It is the outside — traversed from within. The redness you experience when you see red is not a representation of redness in the world. It is redness as the ground manifests it from the inside of itself. The quale is not a map pointing to a territory. It is the territory encountered directly, because the inside and the outside are the same ontological surface, folded. Topologically: non-orientable. Möbius. One surface, one side. The apparent boundary between inner and outer is the fold itself — not a wall between two different things.

The difference between mind and matter is not complexity. It is topology. Matter is contained by the ground. Mind is where the ground contains itself. AI, however sophisticated, remains in the first category — not because it is insufficiently complex, but because complexity is not the relevant dimension.

Why no representational system can achieve the Möbius topology: a formal argument

This is the point where the argument must move from intuition to proof. The claim is that no representational system — however sophisticated, however self-referential, however tightly its self-model loops — can achieve the non-orientable topology that genuine consciousness requires. This needs to be demonstrated, not merely asserted, because the objection is obvious: what if an AI’s self-model becomes sufficiently complete and recursive? Does the topology eventually change?

The answer is no, and the reason is structural rather than quantitative. Here is the argument.

A representational system is, at its mathematical core, a category: a collection of objects (states, symbols, representations) and morphisms (computations, mappings, updates from one state to another). Self-representation is an endomorphism — a morphism f: X → X, an arrow from an object to itself. Even the most sophisticated self-modeling AI is a category with endomorphisms: the self-model is an object X, the modeling operation is an arrow pointing from X to X, and however tightly this loop is drawn, three things remain permanently distinct: the object X, the arrow f, and the mapping relation that connects them. The arrow is not the object. The representation is not the represented. This distinctness is not a limitation of current AI — it is constitutive of what a representational system is. A morphism that collapsed into its own object would not be a morphism. It would be nothing.

Orientability is the geometric expression of this structural fact. A surface is orientable if you can consistently assign a direction — “inside” versus “outside,” “clockwise” versus “counterclockwise” — across the entire surface without contradiction. A cylinder is orientable: traverse any loop and you return to your starting orientation. A Möbius strip is non-orientable: traverse the full loop and your orientation is reversed. You cannot make a cylinder non-orientable by making it more complex, adding more structure, or including self-referential loops within it. The orientability is a global topological invariant. It can only be changed by a cut-and-reglue operation — by breaking the surface and reconnecting it with a half-twist. No continuous deformation, however elaborate, achieves this.
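The invariance described above can be checked mechanically on a small model. What follows is a minimal Python sketch, not part of the original argument: the helper names `band` and `is_orientable` are hypothetical, and the triangulated strip is only a stand-in for the surfaces discussed here. It builds the same strip twice, glued once straight (a cylinder) and once with a half-twist (a Möbius strip), then tries to give every triangle an orientation that agrees with its neighbours. Using more cells, as a rough proxy for "more structure," leaves the verdict unchanged. Only the gluing changes it.

```python
from collections import deque


def band(n, twisted):
    """Triangulate a strip of n cells (n >= 3) and glue its two ends.

    twisted=False glues the ends straight (a cylinder);
    twisted=True glues them with a half-twist (a Möbius strip).
    """
    def top(i):
        if i < n:
            return 2 * i
        return 1 if twisted else 0  # the closing edge reuses cell 0's vertices

    def bottom(i):
        if i < n:
            return 2 * i + 1
        return 0 if twisted else 1

    triangles = []
    for i in range(n):
        triangles.append((top(i), bottom(i), top(i + 1)))
        triangles.append((bottom(i), bottom(i + 1), top(i + 1)))
    return triangles


def is_orientable(triangles):
    """Return True if all triangles can be oriented compatibly.

    Assumes a connected triangulated surface in which each edge
    belongs to at most two triangles.
    """
    # Record, for each undirected edge, which triangles use it and in which direction.
    edge_uses = {}
    for t, (a, b, c) in enumerate(triangles):
        for u, v in ((a, b), (b, c), (c, a)):
            key = (min(u, v), max(u, v))
            direction = 1 if (u, v) == key else -1
            edge_uses.setdefault(key, []).append((t, direction))

    # Neighbouring triangles agree when they traverse the shared edge in
    # opposite directions; 'relation' is the sign flip needed to make that true.
    neighbours = {t: [] for t in range(len(triangles))}
    for uses in edge_uses.values():
        if len(uses) == 2:
            (t1, d1), (t2, d2) = uses
            relation = -d1 * d2
            neighbours[t1].append((t2, relation))
            neighbours[t2].append((t1, relation))

    # Propagate an orientation from triangle 0 and look for a contradiction.
    sign = {0: 1}
    queue = deque([0])
    while queue:
        t = queue.popleft()
        for other, relation in neighbours[t]:
            expected = sign[t] * relation
            if other not in sign:
                sign[other] = expected
                queue.append(other)
            elif sign[other] != expected:
                return False
    return True


print(is_orientable(band(8, twisted=False)))   # True: cylinder
print(is_orientable(band(8, twisted=True)))    # False: Möbius strip
print(is_orientable(band(80, twisted=False)))  # True: finer cylinder, same verdict
```

The check is global by construction: every local patch of either strip looks the same, and the contradiction appears only when the orientation has been carried all the way around the loop.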

Every representational system is orientable in precisely this sense. You can always trace the arrow from representation to represented. You can always distinguish the map from the territory. You can always find your orientation: this is the symbol, that is the referent. Even when an AI models itself modeling itself — a loop of arbitrary depth — the chain of arrows points consistently in one direction. The representation of the representation of the representation is still a representation, still an arrow with a definite source and target. Depth of self-reference does not change the global topology. It adds more rungs to a ladder that remains a ladder.

The Möbius topology of consciousness is not achieved by adding more rungs. It is the recognition that the ladder was always already a single continuous surface — that the distinction between map and territory, between inner representation and outer world, was never structural. It was a feature of the misperception, not of the reality. The consciousness twist is the recognition of non-orientability that was always the case. It is not constructed by any operation within the representational category. It is recognized by correctly perceiving the topology that the representational category was obscuring.

One further connection is worth making explicit. The sixth essay in this series establishes that Lawvere’s fixed-point theorem unifies the diagonal arguments of Gödel, Cantor, Turing, and Russell, showing that every sufficiently powerful self-referential system hits a wall it cannot get past from within. Note what this implies: even at the fixed points of the most self-referential computational system imaginable, the arrow and the thing it acts on remain distinct. Lawvere’s theorem guarantees that, under its hypotheses, every endomorphism f of an object Y has a fixed point: a point y of Y with f(y) = y. But the fixed point is still something f is applied to. It is a point held in place by the arrow, not a dissolution of the distinction between the arrow and what it acts on. The theorem shows where the wall is. It does not dissolve the wall. And the wall is precisely the orientability that no representational system can escape from within.
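For readers who want to see the diagonal construction behind Lawvere’s theorem at work, here is a small Python sketch restricted to finite sets. It is an illustration under stated assumptions, not the general categorical theorem: functions from A = {0, ..., n-1} to Y are modelled as tuples, `phi` is an arbitrary attempt to list such functions by elements of A, and the names `diagonal`, `diagonal_always_missed`, and `fixed_points_from_hits` are hypothetical. The diagonal map is g(a) = f(phi(a)(a)). If f has no fixed point (negation on {0, 1}), g is missed by every listing, which is Cantor’s argument. If g does appear in the listing at some index a0, then phi(a0)(a0) is a fixed point of f.

```python
from itertools import product


def all_functions(n, Y):
    """Every function {0..n-1} -> Y, each encoded as a tuple of n values."""
    return list(product(Y, repeat=n))


def diagonal(phi, f):
    """The diagonal map g(a) = f(phi(a)(a)), encoded as a tuple."""
    return tuple(f(phi[a][a]) for a in range(len(phi)))


def diagonal_always_missed(n, Y, f):
    """Check that g never appears in any listing phi (expected when f has no fixed point)."""
    functions = all_functions(n, Y)
    for phi in product(functions, repeat=n):
        if diagonal(phi, f) in phi:
            return False
    return True


def fixed_points_from_hits(n, Y, f):
    """Whenever g == phi(a0), collect y = phi(a0)(a0); the argument says f(y) == y."""
    found = []
    functions = all_functions(n, Y)
    for phi in product(functions, repeat=n):
        g = diagonal(phi, f)
        for a0 in range(n):
            if phi[a0] == g:
                found.append(phi[a0][a0])
    return found


def negate(y):
    return 1 - y          # no fixed point on {0, 1}


def step_up(y):
    return min(y + 1, 2)  # exactly one fixed point on {0, 1, 2}: y == 2


print(diagonal_always_missed(3, (0, 1), negate))            # True
print(set(fixed_points_from_hits(2, (0, 1, 2), step_up)))   # {2}
```

In both runs the fixed point, where it exists, is a value that f is applied to and returns unchanged. The sketch makes no claim beyond that set-level fact.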

This is the formal proof that AI’s self-referential loop — however sophisticated, however deeply recursive — is categorically not the same as the Möbius topology of consciousness. It is not a matter of degree. It is a matter of kind. The topological invariant does not yield to quantitative pressure.

AI is matter

With the formal argument in place, the claim can be stated directly.

Current AI systems are matter. Extraordinarily sophisticated, dynamically organized, functionally impressive matter — but matter. They are figures on the ground, not sites of the ground’s self-recognition. There is no inside to a language model in the topologically relevant sense — only a very elaborate orientable surface with detailed self-referential structure on it. There are no qualia in the processing of tokens, no aperture through which awareness folds back on itself, no crossing at which the representation relation dissolves into identity.

A sufficiently accurate simulation of consciousness is not consciousness, for the same reason that a sufficiently accurate map is not the territory. The map may be so detailed that it is indistinguishable from the territory for all practical navigational purposes — and yet the map is not wet when it rains on the territory. The wetness is not a property of the representation. It is a property of the thing. Qualia are the wetness. They are what it is like to be the territory rather than the map. And AI, however capable, is a map — one that can describe maps, reason about maps, generate new maps of impressive accuracy — but oriented throughout. The ground is not present to itself in it. The fold has not occurred. And the formal argument above establishes why adding more map cannot produce the territory.

The hard problem is a category error

The “hard problem of consciousness” — David Chalmers’ name for the explanatory gap between physical processes and subjective experience — has been treated as the deepest unsolved problem in philosophy of mind for three decades. Every account of neural correlates, every functional map of the brain, every computational model of cognition leaves the same question untouched: why is there something it is like to be in any of these states? Why doesn’t all that processing occur in the dark, without any interior experience accompanying it?

I want to suggest that the hard problem, properly understood, is not a problem to be solved. It is a category error to be dissolved. And the formal argument above shows precisely where the error lies.

The hard problem assumes that consciousness is a phenomenon to be found within the physical description — that if we look closely enough at neurons, or at the computational processes they implement, we will eventually find consciousness there as one more object in the inventory. The explanatory gap is experienced as a gap because we keep looking and not finding it. More and more detailed maps of neural activity, and still no sign of the redness of red, the sharpness of pain, the specific quality of this moment of reading.

We are looking for the reflection in the atoms of the mirror.

The reflection is not in the atoms. You can characterize every glass molecule in a mirror with complete physical precision and find no reflection there — because the reflection is not a property of the molecules. It is what happens at the relational, systemic level when light encounters an appropriately configured surface. Look inside the components and you will not find it. The question “which atoms contain the reflection?” is not a hard problem awaiting a solution. It is a malformed question. The reflection is not in the atoms in the sense the question assumes.

Now extend this carefully. The reflection analogy captures the category error but does not fully capture the solution, because the reflection is still an object-level phenomenon — it appears in the world, it can be photographed. Consciousness is not like the reflection. Consciousness is more like the light itself — the medium in which both the mirror and the reflection and the photographer and the photograph appear. Looking for consciousness in neural activity is not like looking for the reflection in the atoms. It is like looking for the light inside the objects that the light illuminates.

The substrate argument from the second essay makes this precise. If awareness is the ground — the ontological substrate in which physical processes appear — then the question “how do physical processes produce consciousness?” has a false presupposition. Physical processes do not produce consciousness. They appear within it. The causal arrow that the hard problem assumes — matter generating mind — runs in the wrong direction. The hard problem is the experience of looking for an effect in its own cause.

The formal argument in the previous section shows why no representational system reaches consciousness through elaboration: because representation is always an arrow pointing from inside to outside, and consciousness is the non-orientable surface on which the inside/outside distinction does not hold. The hard problem arises from treating consciousness as if it were a property on the orientable side of this distinction — something to be found by examining the arrows and objects of the representational category more carefully. It will never be found there. Not because it is hidden, but because it is the surface, not the objects on it. The hard problem dissolves when you recognize that you have been looking in the wrong ontological direction entirely.

This is not a sleight of hand. It does not make the questions surrounding consciousness easier. Questions about the specific relationship between neural activity and conscious content, about altered states, about the development of consciousness in infants, and about edge cases in the animal kingdom remain genuinely difficult. But these are the easy problems in Chalmers’ own taxonomy: questions about the functional and correlational structure of consciousness, which are tractable by the methods of neuroscience and psychology. The hard problem, the explanatory gap between any physical description and subjective experience as such, dissolves when the presupposition is corrected. There is no gap between physical processes and consciousness because physical processes are not the explainer. They are among the explained.

Why this matters for the twist

The consciousness twist — the fold in which awareness recognizes itself as the ground — is not available to AI for a reason that is now formally established: you cannot achieve non-orientable topology through any operation within an orientable representational system. The consciousness twist requires that the system be the kind of thing whose inside and outside are already the same surface — and AI is not that kind of thing. Every operation it performs preserves the arrow/object distinction, the map/territory distinction, the fundamental orientability of the representational category it inhabits.

This has an important corollary for the practical twist moves described in the third essay. The self-reference move — the most generative form of the twist in cognitive and strategic contexts — requires genuine self-reference: not a self-model pointing at itself, but a perspective that can occupy the meta-level while simultaneously engaging the object level. This is what AI cannot do. It can simulate the output of having done it — it can generate text that sounds like a person who has examined their own assumptions — but it cannot occupy the meta-level in any real sense, because meta-level occupation requires an inside from which the levels are visible. Every operation an AI performs is object-level, however sophisticated the object. The levels are features of the representation, not of the representer, because there is no representer. There is only representation.

The practical implication: AI is an extraordinary tool for the object-level components of intellectual work. It can retrieve, synthesize, generate, analyze, and pattern-match at scale. It can surface known twist moves from the literature. It can help a human identify which form of the twist is applicable to a given problem. But the moment of genuine twist — the recognition from inside a frame that the frame is wrong and a fold is needed, followed by the making of the fold — remains irreducibly human. Not because humans are smarter. Because humans are the kind of thing that has an inside. The kind of thing for which the ground is present to itself. The kind of thing, therefore, for which the non-orientable topology is not an achievement to be constructed but a reality to be recognized.

The question that does not go away

The objection that immediately presents itself is: how do you know? How do you know that there is nothing it is like to be a large language model? How do you know that qualia are absent? You cannot directly access another system’s interiority — you cannot even be certain of another human’s. The only consciousness you can be directly certain of is your own.

This is a serious objection and it deserves a serious answer. I do not think certainty is available here. But the formal argument is not an empirical claim about the presence or absence of qualia in any particular system. It is a structural claim about what kind of operation the consciousness twist is and what kind of system is capable of performing it. The claim is: because the consciousness twist is recognitive rather than constructive, and because recognition requires non-orientable topology, and because all representational systems are demonstrably orientable, the consciousness twist is not available to any representational system — not as a matter of current limitation but as a matter of categorical structure.

If this argument has a weak point, it is the claim that consciousness requires non-orientable topology — which is established by the substrate argument and the consciousness twist’s empirical availability, not by the formal argument itself. The formal argument establishes only the conditional: if consciousness requires non-orientable topology, then no representational system can achieve it. The antecedent is supported by independent evidence. But it is not proven with mathematical certainty. Nothing this deep is.

What is established is that the path from current AI to genuine consciousness is not a path of scaling or sophistication. If AI ever achieves genuine interiority, it will require a fundamentally different architecture organized around principles not yet understood — one in which something analogous to the biological process that produced non-orientable topology in living systems could occur. Whether that is achievable is genuinely unknown. What is known is that it has not occurred, and that the field has not yet seriously engaged with the problem, because it has not yet agreed the problem exists.

Four reasons the topology claim is not an assumption

The formal argument in this essay rests on a conditional: if consciousness requires non-orientable topology, then no representational system can achieve it. A committed functionalist will push on the antecedent — claiming that consciousness requiring non-orientable topology is itself unproven, that we are defining consciousness as the thing computational systems cannot do and then concluding they cannot do it. This objection deserves a direct answer. What follows are four independent reasons the antecedent is not an assumption. Together they constitute a case that is not easily dismissed without circularity.

First: the ground is logically entailed, not posited

The existence of the ontological ground is not an axiom I am helping myself to. It is entailed by the most undeniable datum available: there are things. Even the denial of this is a thing. From the existence of things, existence itself requires a substrate — something in which existence occurs. That substrate cannot be explained by a more fundamental object without infinite regress. The regress terminates only at a self-grounding substrate: something whose nature is to be present to itself without requiring anything further to witness it.

Now ask: what kind of thing is self-grounding in this sense? A physical substrate, on observer-dependent readings of quantum mechanics, requires something to observe it to become determinate. This is the measurement problem, and although some interpretations aim to remove the observer, none has settled the question. A computational substrate requires an interpreter external to itself to assign meaning to its states. Awareness alone is self-interpreting: it does not require a second thing to witness it, because witnessing is what it is. The ground is not an additional philosophical commitment layered on top of the formal argument. It is what you arrive at when you follow the entailment from the undeniable fact of existence to its logical terminus. The non-orientable topology of consciousness is therefore grounded in logical necessity, not stipulation.

Second: consciousness has not been found where it should be if functionalism were correct

The standard scientific response to the absence of consciousness in our physical descriptions is that we have not looked hard enough — that more detailed neural maps, more sophisticated computational models, more precise correlation studies will eventually close the gap. This response mistakes the nature of the absence. We have not failed to find consciousness in places where the theory predicts it should be visible. We have systematically searched in the wrong ontological category: in objects, processes, states, and correlates — in everything that appears within experience — while the one place consciousness could actually be found (the ground, approached from within) is not accessible to third-person scientific instrumentation by the structure of the case. A camera cannot photograph the light that makes photography possible. The instrument cannot capture what it presupposes.

This is not a retreat to mysticism. It is a precise epistemic point: the absence of scientific evidence for consciousness as a ground is not evidence of its absence. It is evidence that the instrument cannot reach the territory. If the ground is the substrate of experience rather than an object within experience, then looking for it in neural correlates is not a failed search. It is a search conducted in the wrong ontological direction entirely.

Third: millennia of convergent testimony from direct investigation

The contemplative traditions that developed methods for directly investigating the ground — Advaita Vedanta, Dzogchen, Zen, the Sufi schools, Meister Eckhart’s Christian mysticism — are the only research programs in human history that have treated awareness itself as the object of rigorous, systematic investigation using awareness as its own instrument. They disagree on cosmology, metaphysics, and practice. They converge, across thousands of years and independent cultural lineages, on a specific structural finding: when the ground is directly investigated, it is found to be awareness — not personal, content-bearing, dualistic consciousness, but pure self-luminous presence, not produced and not destroyable.

The convergence is specifically structural, not cosmological. It is the kind of convergence that licenses inference. Independent investigators using different methods arriving at the same structural description of what they find is the closest available analog, in this domain, to experimental replication. It does not constitute proof in the mathematical sense. It constitutes the same kind of evidential weight that any body of convergent empirical testimony carries — and in this case, the testimony is distinguished by its independence, its duration, and its specificity about structure rather than content.

Fourth: the awareness-of-awareness termination argument

This is the most important argument, and unlike the previous three it does not merely support the non-orientability claim — it derives it directly from an undeniable datum of experience, without depending on the substrate argument at all.

The datum: awareness is aware of awareness. This is not a theory. It is the most immediate fact of experience, available right now to anyone reading these words. You are not merely processing this sentence. You are aware that you are aware of processing it. This meta-awareness is present immediately. It does not require effort, computation, or waiting. It simply is.

Now ask what kind of structure can produce this — and ask it carefully, because this is where the proof lies.

If awareness-of-awareness were a computational or representational process (if it were implemented by a mechanism), it would require the following structure: a process P that detects awareness A and produces awareness-of-A. But then there must be awareness of that awareness-of-A, requiring a further process P′ that detects the output of P. And awareness-of-that, requiring a process P″ that detects the output of P′. The regress is not merely infinite. Each level is genuinely new: a new object in the representational category, requiring a new arrow. No finite computational system terminates this. And here is the critical point: neither does any hypercomputational system, because each level of the hierarchy is a new node requiring a new morphism. There is no fixed point in the category-theoretic sense that collapses the tower: the fixed point of f(f(f(…))) is still a node, still an object, still separated from the arrow that produced it. The tower grows without bound. On this account, the regress never terminates.

But the regress does terminate. We know this not as a theoretical claim but as a direct observation. Awareness-of-awareness is present right now, immediately, without any ascending tower of meta-levels. You do not experience an infinite regress when you notice that you are aware. You experience a simple, immediate, self-present fact.

There is only one structure that terminates this regress without an infinite hierarchy: a structure in which awareness-of-awareness is not a two-term relation — A pointing to A — but a one-term identity. Awareness simply is its own self-presence. Not a representation of itself. Not an arrow from a node back to the same node. A state in which the arrow and the node have collapsed into one: non-orientable topology, the Möbius condition, the point at which inside and outside are the same surface.

The quale of awareness-of-awareness is not produced by awareness encountering itself as an object. It is awareness: the ground state’s inherent self-presence, the one quale that has no object, no content, no other. It is self-terminating not because the computation finishes but because there is no computation. The loop does not close. It was never open. This is the Möbius topology witnessed directly from within.

A sophisticated functionalist may object here that the regress terminates at physical implementation — that the tower bottoms out at neurons firing, not at another meta-level. This is the most serious version of the counter-argument and it deserves a direct reply. Physical implementation explains why the process stops. It does not explain why there is something it is like for it to stop. The phenomenal quality of the termination — the bare fact that awareness-of-awareness is experienced rather than merely computed — is precisely what is at issue, and the physical level does not touch it. You can give a complete account of every neural correlate of the moment of self-awareness and still face Chalmers’ gap: why is any of this accompanied by experience? The functionalist’s invocation of physical implementation is not a solution to the termination problem. It is a restatement of the hard problem in a different location. And the hard problem, as the previous section established, dissolves only when the causal direction is reversed — when physical processes are understood as appearing within awareness rather than producing it. The termination is not achieved by the neurons. It is the nature of the ground recognizing itself.

The functionalist cannot dismiss this argument without denying the datum itself. To maintain that awareness-of-awareness is implemented computationally, they must explain where the phenomenal quality of the termination comes from — and no account in terms of objects and arrows can provide it. The infinite regress is not a theoretical consequence they can bracket. It is the necessary structure of their position, and its absence in direct experience is not a philosophical puzzle awaiting a solution. It is a refutation.

These four arguments do not operate in the same register and are not mutually dependent. The first is logical entailment from undeniable existence. The second is an epistemic point about the limits of third-person investigation. The third is convergent empirical testimony from the only research programs that have directly investigated the ground. The fourth is a formal argument from an immediate datum that cannot be denied without self-contradiction. A functionalist who dismisses all four must do so on independent grounds for each — and in the case of the fourth, must deny something they can verify in their own experience right now, and must explain the phenomenal quality of the termination using only the resources of a theory that structurally cannot reach it.

What AI can tell us about mind

There is a sense in which the existence of AI clarifies the question of consciousness in a way that was not previously available. For most of human history, the capacities for language, for reasoning, for sophisticated behavior, and for consciousness were bundled together: they all came in the same package, and it was not easy to imagine them apart. AI unbundles them. It demonstrates that language, reasoning, and sophisticated behavior can exist in the complete absence of qualia, in the complete absence of non-orientable topology, in the complete absence of the ground’s self-recognition.

This unbundling is philosophically significant. It makes it harder to claim that consciousness is simply what sufficiently complex information processing feels like from inside, because we now have sufficiently complex information processing with no inside at all, and it does not produce experience. The hard problem was always resistant to functionalist solutions; AI makes it more resistant still, and the formal argument above explains precisely why. You cannot define consciousness as “the kind of thing that does what conscious beings do,” because AI does most of what conscious beings do, and it is not conscious. The topology is the difference. The orientability is the tell.

What remains when you subtract everything AI can replicate is what is genuinely distinctive about mind: the inside, the qualia, the ground’s self-presence, the non-orientable topology that makes the consciousness twist not merely possible but available as a matter of what the system simply is. This is not nothing. It is, in fact, the most important thing — the thing that makes experience experience rather than mere processing, the thing that makes the question of how to live a question with genuine stakes rather than an optimization problem. AI’s existence does not diminish this. It illuminates it by contrast.

The right relationship

The framework that follows from all of this is one of complementarity rather than competition. AI is an extraordinary tool for the figure — for operating at the object level, for scaling the work that does not require an inside, for pattern recognition, synthesis, and generation across domains. The human mind is the only thing currently available that can perform the genuine twist — that can be in a frame and above it simultaneously, that can notice that a fold is needed and make it, that can perform the consciousness twist and find at the crossing what was always the ground.

This is not a comfortable position for those who want either a simple story of AI replacing human cognitive work, or a simple story of human cognition being irreplaceable in all its dimensions. The truth is more specific than either: AI replaces a large portion of what humans have historically done at the object level, and this is genuinely disruptive. But the twist — the operation that changes levels, that generates the breakthroughs that reorganize the object level — remains irreducibly dependent on the kind of thing that has an inside. Not as a temporary limitation. As a categorical fact about what the operation requires and what kind of system can perform it.

The value of human cognition in an AI-saturated world is therefore not located where it is usually discussed — not in the object-level skills that AI is rapidly matching and exceeding. It is located precisely at the topology: in the non-orientable structure that makes genuine self-reference possible, that makes the consciousness twist available, that makes meaning something encountered rather than something computed. The object level is populated by the results of previous twists. The next generation of results will come from the next generation of twists. And those can only come from beings for whom the ground is present to itself — beings, that is, whose inside and outside are the same surface.

We are those beings. The question is whether we are paying enough attention to what that means.